Fasten your seatbelts. The speed and power of next-generation computers will blow your mind.

By Jana Manolakos

Canada is just beginning to catch up in the global race for bigger, better computers, with the country’s network of academic supercomputers climbing the Top500 list of the world’s most powerful supercomputers. And even as these high-performance machines help Canadian researchers find answers that were previously out of reach, companies like IBM, Google, Intel and Microsoft are leaping ahead with whole new breeds of machine: quantum and exascale computers.

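Unlike classical computers, which process information as bits (a series of ones and zeros), a quantum computer uses quantum bits, or qubits, which can be built from physical systems such as individual atoms, trapped ions or superconducting circuits. A qubit can exist in a superposition of 0 and 1, and groups of qubits can be entangled, so for certain kinds of problems a quantum computer can, in principle, outperform classical machines by an astronomical margin.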

In 2016, IBM became the first company to put a quantum computer on the cloud. Its IBM Quantum Experience platform now has 18 public and commercial systems and 240,000 registered users around the world, who have run hundreds of billions of circuit executions (a circuit is the basic unit of work done on a quantum computer), leading to more than 230 published third-party research papers. IBM went commercial the following year, becoming the first company to offer universal quantum computing systems commercially through the IBM Q Network, which now connects more than 100 organizations, including Fortune 500 companies, startups, research labs and educational institutions.
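To give a feel for what a circuit is, here is a minimal, hypothetical sketch of a two-qubit state-vector simulator running on an ordinary computer. It is a teaching toy, not IBM’s software or the IBM Quantum Experience API: a Hadamard gate puts the first qubit into superposition, a CNOT gate entangles it with the second (the classic "Bell state" circuit), and each "execution" samples one measurement outcome. Note that n qubits need 2^n amplitudes, which is why simulating larger quantum computers classically becomes expensive so quickly.

```python
import math
import random

# Hypothetical toy simulator: the two-qubit state is a list of 4 complex
# amplitudes for |00>, |01>, |10>, |11>, indexed as 2*q0 + q1.

def hadamard_on_q0(state):
    """Apply a Hadamard gate to qubit 0 (the high-order bit of the index)."""
    s = 1 / math.sqrt(2)
    a00, a01, a10, a11 = state
    return [s * (a00 + a10), s * (a01 + a11), s * (a00 - a10), s * (a01 - a11)]

def cnot_q0_to_q1(state):
    """Flip qubit 1 whenever qubit 0 is 1: swaps the |10> and |11> amplitudes."""
    a00, a01, a10, a11 = state
    return [a00, a01, a11, a10]

def run_circuit(shots, seed=0):
    """Prepare a Bell state, then 'execute' the circuit `shots` times by sampling."""
    state = [1 + 0j, 0j, 0j, 0j]                  # start in |00>
    state = cnot_q0_to_q1(hadamard_on_q0(state))
    probs = [abs(a) ** 2 for a in state]           # Born rule: |amplitude|^2
    rng = random.Random(seed)
    labels = ["00", "01", "10", "11"]
    counts = {label: 0 for label in labels}
    for outcome in rng.choices(labels, weights=probs, k=shots):
        counts[outcome] += 1
    return probs, counts

if __name__ == "__main__":
    probs, counts = run_circuit(1000)
    print("probabilities:", [round(p, 3) for p in probs])
    print("counts:", counts)
```

The two qubits are always measured as equal: outcomes "00" and "11" each have probability one half, while "01" and "10" never appear, and the sampled counts split roughly evenly between the two allowed outcomes.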

The exascale computer joins the race

Meanwhile, as the race for quantum supremacy heats up, classical computing has produced an unexpected contender. Last July, Ewin Tang, a tech prodigy from the University of Texas, developed an algorithm that enabled classical computers to solve problems at blistering speed, as much as 60 times faster than the world’s fastest supercomputer, Summit, housed at the Oak Ridge National Laboratory. Summit performs about 200 quadrillion calculations per second and may even challenge some quantum computers. An exascale computer performs $10^{18}$ (a quintillion) operations per second, which still pales in comparison with a quantum computer’s $10^{1000}$ (ten trecendotrigintillion) operations per second.

So, while quantum and exascale computers – with their exponentially larger storage, memory and computational power – will one day help us understand nature better, create vaccines faster and boost machine learning and artificial intelligence, Canadian researchers are currently making the most of a national network of supercomputers.

Modern supercomputers harness as many as a million processors working in parallel on the same problem. Today, Canada has five major supercomputers, part of a national advanced research computing infrastructure coordinated by Compute Canada and its regional partners: Compute Ontario, Calcul Québec, ACENET and WestGrid. Launched in 2007, Compute Canada offers a team of more than 200 experts, employed by 37 partner universities and research institutions across the country, who support researchers with large-scale computation and simulation in artificial intelligence, climate change research, ocean modelling, genomics, astrophysics and other data-intensive disciplines.
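To make "working in parallel on the same problem" concrete, here is a minimal sketch using Python’s standard `multiprocessing` module on a single machine. Supercomputers typically coordinate processes across many nodes with MPI instead, so treat this only as an analogy. It estimates π by splitting the numerical integral of 4/(1 + x²) over [0, 1] into chunks, computing each chunk in a separate process, and adding up the partial results. The function names are hypothetical.

```python
import math
from multiprocessing import Pool

def split_work(n, workers):
    """Divide intervals 0..n-1 into `workers` contiguous (start, stop) chunks."""
    chunk = -(-n // workers)  # ceiling division
    return [(min(k * chunk, n), min((k + 1) * chunk, n)) for k in range(workers)]

def partial_sum(task):
    """Midpoint-rule integral of 4/(1+x^2) over one worker's share of intervals."""
    start, stop, n = task
    h = 1.0 / n
    return h * sum(4.0 / (1.0 + ((i + 0.5) * h) ** 2) for i in range(start, stop))

def estimate_pi(n=1_000_000, workers=4):
    """Hand each chunk to its own process, then combine (reduce) the results."""
    tasks = [(start, stop, n) for start, stop in split_work(n, workers)]
    with Pool(processes=workers) as pool:
        partials = pool.map(partial_sum, tasks)  # chunks run concurrently
    return sum(partials)                          # reduction step

if __name__ == "__main__":
    print(f"pi estimate {estimate_pi():.10f} vs math.pi {math.pi:.10f}")
```

The same divide, compute and combine pattern scales from four processes on a laptop to thousands of processors on a cluster; what changes is how the chunks are distributed and how the partial results are gathered.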

Canadian supercomputers are pushing the boundaries of knowledge

Earlier powerhouse systems (Fire at Queen’s University, McKenzie at the University of Toronto and Glacier at the University of British Columbia (UBC)) paved the way for today’s behemoths of computational power: Arbutus at the University of Victoria, Graham at the University of Waterloo, Cedar at Simon Fraser University (SFU), Niagara at the University of Toronto, and Béluga, the most recent addition in 2019, located at the École de technologie supérieure.

Béluga makes a splash

Calcul Québec’s supercomputer, Béluga, is 300,000 times faster than the average laptop and has 67,000 times more storage space. Located at the École de technologie supérieure in Montreal, Béluga made a splash last April when it launched with $12.8 million in funding from the Quebec government, in addition to funding from the Canada Foundation for Innovation and the Fonds de recherche du Québec. It is the main high-performance computing infrastructure in Quebec. Pierre-Étienne Jacques, Chief Science Officer for Calcul Québec, notes, “First used principally in physics and chemistry, advanced research computing is now pivotal in most research areas.”

Niagara offers a rush of information

A powerful research supercomputer, Niagara, roared onto the research scene in 2018. Available to researchers of all disciplines across the country, it is located at the University of Toronto and supported by SciNet, the university’s high-performance computing division, which is open to all Canadian university researchers. According to University of Toronto theoretical astrophysicist Dr. Ue-Li Pen, “The large parallel capability of Niagara enables world-leading precision cosmological simulations incorporating neutrinos. This draws talent from across the world, focuses their research strengths and brings visibility to the research results.”

Powerful computation takes root at SFU with Cedar

Launched in 2017, Cedar is housed in a data center on Simon Fraser University’s Burnaby campus. With more than 3.6 petaFLOPS of computing power, Cedar combines CPUs and GPUs with very fast interconnects for powerful processing and data management. SFU bioinformatics and genomics professor Fiona Brinkman relies on its capacity to lead the Integrated Rapid Infectious Disease Analysis Project, which needs sophisticated and secure computing power to understand disease outbreaks. SFU physics professor Michel Vetterli leads research that analyzes vast amounts of particle data from CERN’s Large Hadron Collider.

A hefty beast named Graham

Unveiled in 2017 and named after Wes Graham, the University of Waterloo’s “father of computing,” the university’s supercomputer cost $17 million and contains about 16,000 kilograms of computing equipment packed into about 60 refrigerator-sized units. Graham has 50 petabytes of storage capacity, or 50 billion times more than the country’s biggest computer had in 1967. “It is a hefty beast,” says Scott Hopkins, a University of Waterloo chemistry professor. “If you had a calculation that might take a year to run on your desktop computer, with Graham, you might have it done by lunchtime.”
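Hopkins’s “year to lunchtime” comparison can be turned into a rough speedup figure. The sketch below assumes, hypothetically, that “by lunchtime” means about four hours, and adds Amdahl’s law, a standard formula showing why such gains depend on how much of a calculation can actually be split across processors. The function names are illustrative only.

```python
HOURS_PER_YEAR = 365.25 * 24  # average year, including leap days: 8,766 hours

def implied_speedup(desktop_hours, cluster_hours):
    """How many times faster the cluster must be to finish the same job."""
    return desktop_hours / cluster_hours

def amdahl_speedup(parallel_fraction, processors):
    """Amdahl's law: best-case speedup when only `parallel_fraction` of the work
    can be spread across `processors`; the serial remainder runs at normal speed."""
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / processors)

if __name__ == "__main__":
    # Hypothetical: a year-long desktop job finishing in 4 hours ("by lunchtime").
    print(f"Required speedup: {implied_speedup(HOURS_PER_YEAR, 4):,.0f}x")
    # Even with a million processors, 1% serial work caps the gain near 100x.
    print(f"99% parallel on 1,000,000 processors: {amdahl_speedup(0.99, 1_000_000):,.1f}x")
```

A speedup of roughly 2,000 times is well within reach of a machine like Graham only if nearly all of the calculation runs in parallel; a job that is 1 per cent serial cannot run more than about 100 times faster, no matter how many processors it uses.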

Arbutus offers cloud cover

Located at the University of Victoria, Arbutus provides Canada’s academic community with cloud computing services. Its powerful storage and computing capabilities are designed to support researchers processing, sharing and storing massive data sets. Arbutus can store the equivalent of 10 million eight-drawer filing cabinets of text and process calculations thousands of times faster than a desktop computer. Researchers can use Arbutus to build online portals or platforms, handle data scraped from the web, run on-demand visualizations or share work with other devices, team members or external collaborators.

Here’s how some Canadian researchers are advancing their work through Compute Canada’s advanced research computing services and infrastructure:

Harnessing the power of scientific cloud computing to fight COVID-19

The University of Victoria and Compute Canada are using the power of Arbutus, Canada’s largest scientific cloud, to analyze the protein structure of the SARS-CoV-2 virus as part of an international effort to develop drug therapies for COVID-19. The Folding@home project runs protein folding simulations to better understand the disease and find a treatment. Folding@home is the first scientific computing system to break the exascale barrier and is now more powerful than all the Top500 supercomputers in the world combined. Arbutus is currently the fourth-highest individual contributor to the global initiative, and at SFU, Cedar is also contributing with preemptible batch jobs. Arbutus, a research cloud purpose-built for Canadian researchers, can be accessed free of charge for academic research through Compute Canada.

Searching for extraterrestrial lifeforms

Canadian researcher Jason Rowe (interviewed for this issue’s Newsmaker) grabbed headlines in 2014 when he discovered an Earth-like planet using the Kepler Space Telescope. For Rowe, who is now an assistant professor at Bishop’s University and the Canada Research Chair in Exoplanet Astrophysics, discovering and characterizing exoplanets which inhabit distant solar systems has been his life’s work. So far, he’s contributed to the discovery of hundreds of planets. Kepler, which has computers on board that collect transmissions and send them back to Earth, produces an enormous amount of data. And people like Rowe do most of the heavy-duty computation on Earth. To do this math and analysis, they use Canada’s advanced research computing platform coordinated collectively by Compute Canada and its regional partners and member institutions.

Marine microbes reveal the effects of climate change

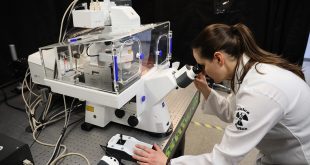

For Julie LaRoche, microbes represent an important indicator of the effects of climate change on ocean life. LaRoche is a biology professor and ocean scientist at Dalhousie University and the Canada Research Chair in Marine Microbial Genomics and Biogeochemistry. These tiny single-celled organisms live in the atmosphere, Earth’s crust, the ocean and our own bodies. LaRoche uses genomics and next-generation sequencing techniques to study the diversity and function of ocean microbes, noting how they are affected by environmental changes. Sequences are entered into a huge database where they are analyzed. “These are big files that take up a lot of memory. We can’t do it on a normal computer, so we have to use ACENET and Compute Canada.”

Designing new molecules in less time

Jason Masuda, a chemistry professor at Saint Mary’s University, is building substances that have never been seen on Earth before. To predict how these new molecules will behave, Masuda uses computing resources from ACENET and Compute Canada to run electronic structure calculations with software called Gaussian09, which helps him predict their volatility. “Gaussian09 is a great tool to save us time. Because we’re pushing the boundaries of what nature allows, quite often I will generate a molecule and get it to optimize on ACENET and then, if it looks promising, we’ll make it in the lab,” Masuda explains. “There’s a saying that two hours in the library saves you two months in the lab. It’s the same with ACENET. Sometimes a couple of hours with Gaussian09 will save you weeks or months in the lab.”
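Gaussian09 solves quantum-mechanical equations for a molecule’s electrons, which is far beyond a short example. As a loose, hypothetical analogy for what it means to “optimize” a molecule, the sketch below finds the bond length that minimizes a simple Lennard-Jones pair potential (a classical model, not what Gaussian computes) by steepest descent: it repeatedly nudges the bond length in the direction of the force until the force, and hence the slope of the energy, is essentially zero. For this potential the exact answer is known, r = 2^(1/6) σ, which makes the result easy to check. All names and parameter values are illustrative.

```python
def lj_energy(r, epsilon=1.0, sigma=1.0):
    """Lennard-Jones pair energy E(r) = 4*eps*[(sigma/r)^12 - (sigma/r)^6]."""
    sr6 = (sigma / r) ** 6
    return 4.0 * epsilon * (sr6 * sr6 - sr6)

def lj_force(r, epsilon=1.0, sigma=1.0):
    """Force along the bond, -dE/dr (positive pushes the atoms apart)."""
    sr6 = (sigma / r) ** 6
    return 24.0 * epsilon * (2.0 * sr6 * sr6 - sr6) / r

def optimize_bond_length(r_start=1.5, step=0.01, tol=1e-8, max_iter=10_000):
    """Steepest descent: move r along the force until the force vanishes."""
    r = r_start
    for iteration in range(max_iter):
        f = lj_force(r)
        if abs(f) < tol:
            return r, iteration
        r += step * f  # step downhill in energy
    raise RuntimeError("optimization did not converge")

if __name__ == "__main__":
    r_opt, n = optimize_bond_length()
    print(f"r = {r_opt:.6f} sigma after {n} steps "
          f"(exact: {2 ** (1 / 6):.6f}), E = {lj_energy(r_opt):.6f} eps")
```

Real geometry optimizers work the same way in spirit, but over every atomic coordinate at once and with far more expensive energy calculations at each step, which is why they are run on clusters.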

Understanding the mechanisms of life

James Polson spends much of his time trying to understand the mechanisms that make life possible. Polson is an associate professor of physics with the University of Prince Edward Island. He studies polymers, a group of long chainlike molecules composed of many repeated chemical subunits.

Polson uses computer simulations and analytical theoretical methods to study the physical properties of polymers in confined and crowded environments. “We’re carrying out numerically intensive calculations to study model systems under conditions that are relevant to recent experiments using DNA,” he says. As part of his research, Polson uses ACENET and Compute Canada systems to create virtual polymer molecules and simulate their behaviour under different conditions. “For each calculation I need 100 to 200 processors for one to two days, and dozens of such calculations are needed for any given project.”
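Polson’s figures can be converted into core-hours, the usual unit for budgeting time on shared clusters. The per-calculation range comes from his quote; the number of calculations per project is a hypothetical stand-in for “dozens.”

```python
HOURS_PER_DAY = 24

def core_hours(processors, days):
    """Compute time used when `processors` cores run for `days` days."""
    return processors * days * HOURS_PER_DAY

# Per-calculation range quoted in the article: 100-200 processors for 1-2 days.
per_calc_low = core_hours(100, 1)    # 2,400 core-hours
per_calc_high = core_hours(200, 2)   # 9,600 core-hours

# Hypothetical stand-in for "dozens of such calculations" per project.
N_CALCULATIONS = 36

project_low = N_CALCULATIONS * per_calc_low
project_high = N_CALCULATIONS * per_calc_high
# Serial equivalent: how long a single core would need for the upper estimate.
single_core_years = project_high / (HOURS_PER_DAY * 365)

if __name__ == "__main__":
    print(f"Per project: {project_low:,} to {project_high:,} core-hours")
    print(f"Upper estimate on one core: about {single_core_years:.0f} years")
```

Under these assumptions, a single project needs roughly 86,000 to 346,000 core-hours, which would keep one processor busy for decades.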

Monitoring forest health

At the Canadian Forest Service, Senior Research Scientist Mike Wulder and his colleagues have been working with imagery from the Landsat series of satellites – covering almost 1 billion hectares of land, represented by about 10 billion Landsat pixels. Using WestGrid and Compute Canada systems, they have been able to undertake research to capture and label three decades of change in Canada’s forests and are now generating annual maps of land cover for the same 30-year period. Wulder explains, “No longer limited by computing considerations, the algorithms we are developing are increasingly sophisticated, providing new and otherwise unavailable information on Canada’s forested land cover and the disturbances and recovery occurring over the forested land base. Canadian science, monitoring and reporting activities are strengthened by the computing capacity offered by WestGrid, as well as by the cross-institutional collaborative work environment.”
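The article’s two figures are consistent with each other: most Landsat reflectance bands have 30-metre pixels, so each pixel covers 900 m², or 0.09 hectares. The sketch below checks the arithmetic; the 30-year multiplier simply assumes one value per pixel per year, as in the annual maps described above.

```python
M2_PER_HECTARE = 10_000

def pixel_count(area_hectares, pixel_size_m=30.0):
    """Number of square pixels of side `pixel_size_m` needed to tile an area."""
    pixel_area_ha = pixel_size_m ** 2 / M2_PER_HECTARE  # 30 m x 30 m = 900 m^2 = 0.09 ha
    return area_hectares / pixel_area_ha

if __name__ == "__main__":
    pixels = pixel_count(1e9)   # ~1 billion hectares, per the article
    pixel_years = pixels * 30   # one labelled value per pixel per year
    print(f"{pixels:.2e} pixels; {pixel_years:.2e} pixel-years over 30 annual maps")
```

A billion hectares works out to about 11 billion pixels, in line with the article’s “about 10 billion” for “almost” a billion hectares, and 30 annual maps multiply that into the hundreds of billions of pixel values.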

Branching out in apple production

Sean Myles studies the genetic composition of apples and runs a program that aims to accelerate apple breeding. Myles, the Canada Research Chair in Agricultural Genetic Diversity and an assistant professor in the Faculty of Agriculture at Dalhousie University, leads a lab that seeks out parts of the apple genome that will lead to winning varieties, helping breeders screen the offspring of their crosses and boost production. “It’s the same principle as screening for genetic diseases in humans,” Myles says. With a genome that’s 750 million letters long and 1,000 varieties, “You can see how the numbers are starting to climb,” he points out. “And you can’t only sequence each letter once — you have to do it multiple times. That results in a huge amount of data. We have about 10 terabytes of data on the Compute Canada cluster. And that’s just the DNA sequence data.”
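Myles’s point about the numbers climbing can be made concrete. The sketch below multiplies the genome length and number of varieties from the article by a sequencing depth (how many times each letter is read). It assumes one byte per letter, with no compression and no per-base quality scores, and the depths are hypothetical, so the results illustrate the scaling rather than reconstruct the lab’s actual 10 terabytes.

```python
GENOME_LETTERS = 750_000_000  # apple genome length, per the article
VARIETIES = 1_000             # number of varieties, per the article

def raw_sequence_bytes(genome_letters, samples, coverage, bytes_per_letter=1):
    """Rough size of raw reads: every letter is read `coverage` times per sample.
    Assumes one byte per letter, with no compression and no quality scores."""
    return genome_letters * samples * coverage * bytes_per_letter

if __name__ == "__main__":
    for coverage in (1, 5, 10):  # hypothetical sequencing depths
        size_tb = raw_sequence_bytes(GENOME_LETTERS, VARIETIES, coverage) / 1e12
        print(f"{coverage:>2}x coverage: {size_tb:.2f} TB")
```

Reading every letter of all 1,000 genomes just once already amounts to 750 billion letters; each additional pass over the genomes adds the same amount again, which is how a project quickly reaches terabytes.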

BioLab Business Magazine Together, we reach farther into the Canadian Science community
